Abstract

Dynamic events can be regarded as long-term temporal objects, which

are characterized by spatio-temporal features at multiple temporal scales.

Based on this, we design a simple statistical distance measure between video

sequences (possibly of different lengths) based on their behavioral content.

This measure is non-parametric and can thus handle a wide range of dynamic

events. We use this measure for isolating and clustering events within long

continuous video sequences. This is done without prior knowledge of the

types of events, their models, or their temporal extent. An outcome of such

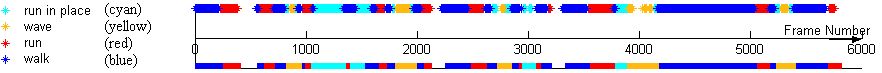

a clustering process is a temporal segmentation of long video sequences

into event-consistent sub-sequences, and their grouping into event-consistent

clusters.
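The kind of multi-scale, non-parametric distance described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation. It assumes grayscale video stored as a NumPy array of shape (frames, height, width), builds a temporal pyramid by averaging pairs of frames, summarizes each temporal scale by histograms of the normalized absolute derivatives in the x, y and t directions, and compares two sequences by the chi-square distance averaged over all scales and directions. The function names, the 2-tap temporal filter, the bin count and the gradient threshold are all hypothetical choices. Because every histogram is normalized, sequences of different lengths can be compared directly.

```python
import numpy as np


def temporal_pyramid(video, levels):
    """Return `levels` versions of a (T, H, W) video, each at half the frame rate
    of the previous one. A 2-tap temporal average is the (assumed) low-pass filter."""
    pyramid = [np.asarray(video, dtype=np.float64)]
    for _ in range(levels - 1):
        v = pyramid[-1]
        n = (v.shape[0] // 2) * 2  # drop a trailing odd frame
        if n < 4:
            raise ValueError("sequence too short for the requested number of levels")
        pyramid.append(0.5 * (v[0:n:2] + v[1:n:2]))
    return pyramid


def event_histograms(video, levels=3, bins=16, grad_thresh=1e-3):
    """Summarize a video as a (levels, 3, bins) array: for each temporal scale and
    each direction (x, y, t), a normalized histogram of |dI/dd| / |grad I|."""
    hists = np.zeros((levels, 3, bins))
    for k, v in enumerate(temporal_pyramid(video, levels)):
        gt, gy, gx = np.gradient(v)  # derivatives along t, y, x
        mag = np.sqrt(gx ** 2 + gy ** 2 + gt ** 2)
        mask = mag > grad_thresh  # skip static, textureless voxels
        for d, g in enumerate((gx, gy, gt)):
            vals = np.abs(g[mask]) / mag[mask]  # share of the gradient in direction d
            counts, _ = np.histogram(vals, bins=bins, range=(0.0, 1.0))
            total = counts.sum()
            if total > 0:
                hists[k, d] = counts / total
    return hists


def chi2(h1, h2):
    """Chi-square distance between two histograms (bins empty in both are skipped)."""
    denom = h1 + h2
    nz = denom > 0
    return float(np.sum((h1[nz] - h2[nz]) ** 2 / denom[nz]))


def event_distance(ha, hb):
    """Average chi-square distance over all temporal scales and directions."""
    levels, dirs, _ = ha.shape
    total = sum(chi2(ha[k, d], hb[k, d]) for k in range(levels) for d in range(dirs))
    return total / (levels * dirs)


# Usage on synthetic clips (made-up data, for illustration only).
H = W = 48
a = np.zeros((32, H, W))  # vertical bar moving right, 1 px per frame
c = np.zeros((32, H, W))  # horizontal bar moving down, 1 px per frame
for t in range(32):
    a[t, :, t:t + 3] = 1.0
    c[t, t:t + 3, :] = 1.0
ha = event_histograms(a)
hb = event_histograms(a[:16])  # same action, half the length
hc = event_histograms(c)
print(event_distance(ha, hb), event_distance(ha, hc))
```

The shorter clip of the same motion should come out much closer to the original than the clip with motion in a different direction. A matrix of such pairwise distances between sub-sequences could then be handed to any standard clustering method; this sketch does not commit to a particular one.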

Our event representation and associated distance measure can also be used for event-based indexing into long video sequences, even when only one short example-clip is available. However, when multiple example-clips of the same event are available (either as a result of the clustering process, or given manually), these can be used to refine the event representation, the associated distance measure, and accordingly the quality of the detection and clustering process.
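Event-based indexing with a single example clip, and refinement with several, might look roughly like this. The sketch assumes that the long sequence has already been cut into (possibly overlapping) windows, each summarized as a stack of normalized histograms of shape (scales, directions, bins). `chi2_distance`, `build_query` and `index_events` are hypothetical names, the threshold is arbitrary, and averaging the example histograms is only a simple stand-in for refinement, not necessarily the procedure described in the paper.

```python
import numpy as np


def chi2_distance(h1, h2):
    """Mean chi-square distance between two (scales, directions, bins) histogram stacks."""
    num = (h1 - h2) ** 2
    denom = h1 + h2
    # Bins that are empty in both histograms contribute 0 instead of 0/0.
    terms = np.divide(num, denom, out=np.zeros_like(num), where=denom > 0)
    return float(terms.sum(axis=-1).mean())


def build_query(example_hists):
    """Hypothetical refinement: average the histograms of several example clips
    into one prototype. With a single example this returns it unchanged."""
    return np.mean(np.stack(example_hists), axis=0)


def index_events(window_hists, query, threshold):
    """Compare every window of the long sequence with the query and return
    (window_index, distance) pairs within `threshold`, closest first."""
    hits = []
    for i, w in enumerate(window_hists):
        d = chi2_distance(w, query)
        if d <= threshold:
            hits.append((i, d))
    return sorted(hits, key=lambda pair: pair[1])


# Toy data (made up): 5 windows, each 2 scales x 3 directions x 4 bins.
walk = np.array([0.1, 0.2, 0.6, 0.1])
still = np.array([0.7, 0.2, 0.1, 0.0])
windows = np.stack([np.broadcast_to(p, (2, 3, 4)) for p in (still, walk, still, walk, still)])

# One short example clip.
one_clip = build_query([np.broadcast_to(walk, (2, 3, 4))])
hits_single = index_events(windows, one_clip, threshold=0.1)

# Two slightly different example clips of the same event.
clip_a = np.broadcast_to([0.1, 0.3, 0.5, 0.1], (2, 3, 4))
clip_b = np.broadcast_to([0.1, 0.1, 0.7, 0.1], (2, 3, 4))
hits_multi = index_events(windows, build_query([clip_a, clip_b]), threshold=0.1)
print(hits_single, hits_multi)
```

With a single example the query is just that clip's histograms; with several examples the averaged prototype is less sensitive to the quirks of any one clip, which is one plausible reading of how extra examples can improve detection.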

Full paper: ZelnikIrani.CVPR2001.ps.zip (5591K) or ZelnikIrani.CVPR2001.pdf (678K)

Full length sequence (54022K)

Clustering actions of a tennis player

Punch-Kick-Duck Sequence

Action Based Video Synthesis

Raw Footage (punch-duck-kick).

Classifying dynamic textures

Questions and comments should be addressed to: lihi@wisdom.weizmann.ac.il