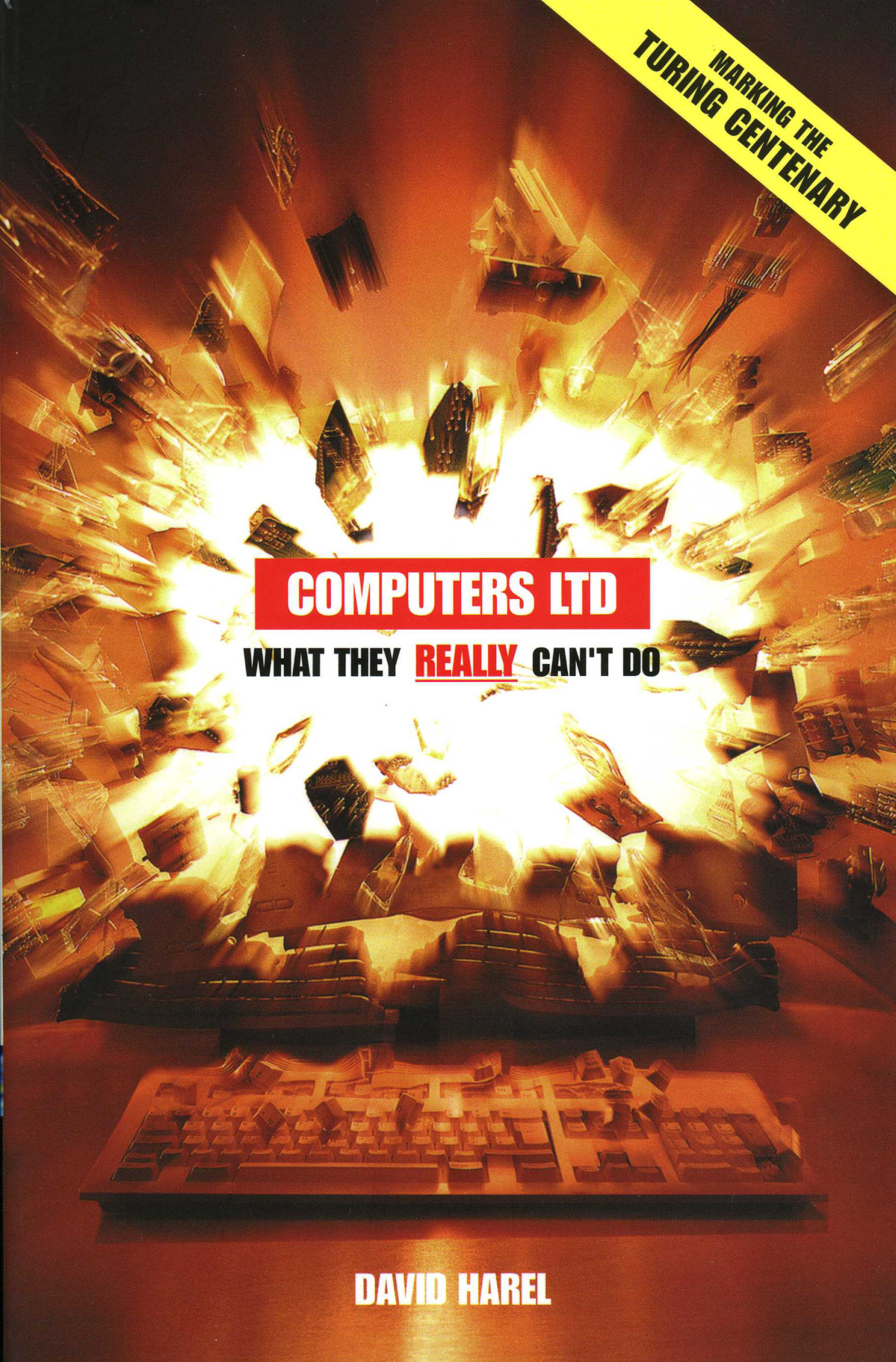

D. Harel, Computers Ltd.: What They Really Can't Do, Oxford University Press, September 2000.

Revised paperback edition, 2003; Special printing with new preface for the Turing Centennial year, 2012. (German , 2002; Polish, 2002; Italian, 2002; Chinese, 2003; Hebrew, 2004.)

|

|

Table of Contents |

- What's it all about?

Algorithms

Basic instructions

The text vs. the process

Inputs

What do algorithms solve?

Isn't our setup too simplistic?

Solving algorithmic problems

Programming

Errors and correctness

Termination

- Sometimes we can't do it

Finite problems are solvable

The tiling problems

Do we really mean it?

Elementary computing devices

The Church/Turing thesis

Computability is robust

Unboundedness is misleading

Program verification

The halting problem

Some problems are even worse!

- Sometimes we can't afford to do it

Resources: time and memory space

Improving running time

Upper and lower bounds

So what?

The towers of Hanoi

The good, the bad, and the ugly

Intractability

Roadblocks and chess

Problems that are even harder

Unreasonably large memory

- Sometimes we just don't know

The monkey puzzle

NP-complete problems

Finding short paths

Scheduling and matching

More on puzzles

Coloring networks

Magic coins

Standing or falling together

The great mystery: Is P equal to NP?

Can we come close?

Sometimes we succeed

- Trying to ease the pain

Parallelism, or joining forces

Fixed vs. expanding parallelism

Can parallelism eliminate the bad news?

More unknowns in parallel computation

Randomization, or tossing coins

More on Monte Carlo algorithms

Testing for primality

Randomized primality testing

Can randomization eliminate the bad news?

Can computers simulate true randomness?

Quantum computing

Quantum algorithms

Can there be a quantum computer?

Molecular computing

- Turning bad into good

Classical cryptography

Public-key cryptography

Signing messages

Can all this be made to work?

The RSA Cryptosystem

Interactive proofs

Zero-knowledge proofs

I can 3-color a network

On millionaires, ballots and more

- Are we ourselves any better?

Algorithmic intelligence?

The Turing test

ELIZA and zupchoks

Heuristics

Representing knowledge

Understanding natural language

In conclusion

In 1984, TIME magazine ran a cover story on computer software. In the otherwise excellent article, the editor of a certain software magazine was quoted as saying:

"Put the right kind of software into a computer, and it will do whatever you want it to. There may be limits on what you can do with the machines themselves, but there are no limits on what you can do with software."

Wrong. Totally wrong. In fact, a simple way of summarizing this book, is to say that it is devoted to describing and explaining the facts that refute --- no, shatter! --- this claim.

Of course, computers are incredible. They are without doubt the most important invention of the 20th century, having dramatically and irrevocably changed the way we live, and mostly for the better. But that is the good news, and good news is what most computer books are about. This book concentrates on the bad, on the negative side of things.

Computers are expensive, which is already bad news. They frustrate us: programming them is laborious and using them can be difficult; they are seductive, luring us away from more important things; they err; they crash; they contract viruses; and on and on. But it is not these kinds of bad news that concern us here. The goal of the book is to explain and illustrate one of the most important and fundamental facets of the world of computing --- its inherent limitations.

Typically, when people have difficulties bending computers to their will, their excuses fall into three categories: insufficient money, insufficient time, and insufficient brains. Being richer, the argument goes, could buy us larger and more sophisticated computers, supported by better software; being younger or having a longer life-span would enable us to wait longer for time-consuming programs to terminate; and being smarter could lead to solutions that we don't seem able to find. These are strong and valid points, and we are not about to contest them: a more generous supply of any of these three commodities could indeed take us a long way. However, for the most part, our book is not about these kinds of hardships either. It concentrates on bad news that is proven, lasting and robust, concerning problems that computers are not able to solve, regardless of our hardware, software, talents or patience. And when we say "proven", we mean really proven; that is, mathematically, and not just experimentally.

Why are we interested in bad news? Shouldn't computer scientists be spending their time making things smaller, faster, easier, more accessible and more powerful? Well, they should, and the vast majority of us actually do. But even so, starting in the 1930s, and increasingly so by the year, many researchers have been working hard to better understand the other side of the coin, that of humbling the computer, by discovering and better understanding its inherent weaknesses.

The motivation for this quest is four-fold:

To satisfy intellectual curiosity. Just as physicists want to determine the ultimate confines of the universe or the constraints imposed by the laws of physics, so do computer scientists want to discover what can be computed and what cannot, and how costly it is when it can.To discourage futile efforts. Many people, among them knowledgeable computer experts, try to tackle computational problems that happen to be subject to devastating bad news. The more we understand these problems and their inherent nature, the less we shall waste our time and energy.

To encourage development of new paradigms. Parallelism, randomization, heuristics, and quantum computing, four of the most promising and exciting topics in computer science research, would not be developing the way they are without the push resulting from the bad news.

To make possible the otherwise impossible. To make possible the impossible?! This is surely paradoxical. How on earth can we hope to profit from bad news? Well, to keep up suspense until Chapter 6, we shall only remark here that this is an unexpected aspect of our story, but also a surprisingly beneficial one.

So much for motivation. As to the nature of the bad news we discuss, consider the large body of very exciting work aimed at endowing computers with human-like intelligence. In its wake, a host of questions arise concerning the limits of computation, such a whether computers can run companies, carry out medical diagnosis, compose music or fall in love. While promising, and often quite amazing, progress has been made in addressing these issues (not very much on the last one, however), these questions are posed in an imprecise and vague manner. With the exception of the last chapter of the book, we are not interested in them here. In contrast, we concentrate on precisely defined computational problems, that come complete with clear-cut objectives. This, in turn, makes it possible to make equally clear-cut statements about whether or not they can be solved satisfactorily.

The issues we discuss are not debatable, and do not involve philosophical, quasi-scientific arguments. Rather, we concentrate on hard facts, rigorously stated and mathematically proved. You don't go looking for triangles whose angles add up to 150 degrees or 200 degrees --- although no-one has ever been able to inspect each and every triangle --- simply because there is a proof that no such triangles exist*. In a similar way, if a computational problem has been proved to admit no solution, and we shall discuss such problems, then seeking a solution is pointless. The same goes for problems that do have solutions, but have been proved to require wholly unreasonably large computers (say, much larger than the entire known universe) or to take wholly unreasonable amounts of computation time (say, a lot more than the time since the Big Bang), and we shall discuss many of these too.

*Planar ones, of course. On a spherical or almost spherical surface,

such as the planet Earth, the sum of the angles of a triangle is in fact

greater than 180 degrees.

By and large, people are unaware of the issues this book addresses. Sadly and surprisingly, this is true also for many members of the computing profession itself, as the quote from TIME shows. This is really unfortunate. If the bad news were some esoteric, recently discovered phenomenon, not having come across it could be understood. The truth is that some parts of our story have been known for almost 70 years, long before real computers were built, and most of the others have been steadily unfolding for the last 30.

To a large extent, the blame is on us, researchers in computer science. We have done far too little in exposing, exemplifying, illustrating, and making comprehensible the basics of our science in general, and its negative facets in particular. This leaves the public in general blissfully unstirred, free to follow with awe the technological advances in hardware and software, to delight in the excitement of new applications, and to gloat over the futuristic possibilities raised by instantaneous telecommunication, multimedia, virtual reality, artificial intelligence, and the internet revolution.

There is no reason to break up the party. We should continue to strive for bigger and better things. But even so, a measure of humility is in place: computers are not omnipotent --- far from it. Moreover, the problem is real, and it is here to stay.

New preface for the Turing Centennial

This book was first published in hardcover in 2000. The paperback edition, published in 2003 and reprinted in 2004 with corrections, was dedicated to Alan M. Turing. The preface you are now reading accompanies a special 2012 reprint of the book, published in honor of Turing's centennial year.

Alan Mathison Turing (b. 23 June 1912, d. 7 June 1954) will, in all probability, be remembered as one of the thinkers with the greatest impact ever, and is definitely one of the most important scientists of the 20th century. This is not the appropriate place to describe in any detail Turing's contributions to computing and to humanity in general. The interested reader can find lots of relevant material in the many articles and books written about him. However, in terms of the topic of this book, it would not be an exaggeration to say that Turing is the grand ancestor of the limitations of computing. Turing's insights from the mid-1930s, alongside the work of Alonzo Church and others, formed the foundations of our understanding that the general notion of computing, and actual computers in particular, are severely limited, which is what this book is all about. Turing's name is associated with both the Church-Turing thesis and the Turing machine ? two of the most basic notions discussed in the book.

Another of Turing's fundamental contributions to computer science revolves around his deep insights into what later became known as artificial intelligence (the person who coined the term, John McCarthy, passed away in late 2011). Turing's test for computerized artificial intelligence is also central to the book and is discussed in detail towards its end, in Chapter 7.

As is well-known, Turing was also instrumental in the code-breaking efforts in the World War II, and most notable is his work on the Enigma code. Chapter 6 is devoted to cryptography, and although Turing's work is not mentioned there explicitly, it played a classical and crucial part in the development of the field. Turing also carried out pioneering work on morphogenesis and the way patterns are formed in the process. In modern terms this work would be considered part of systems biology or bioinformatics, topics that are not discussed in the present book.

On a more personal level, but without getting into any details here, I would like to add that large parts of my own research in the last 38 years can be viewed as very modest continuations and extensions of the work of Turing. Thus, to a large extent I am but a dwarf standing on the shoulders of a true giant; the true giant of our field. I am thus extremely happy that Oxford University Press has agreed to publish this new printing of Computers Ltd. As part of the Turing centennial. For me, this is a true celebration by any measure!

In terms of the contents of the book, the decision has been not to change the text at all except for some minor typographical corrections. The main reason has to do with the fact that when I was planning the book, the criterion for which topics to include centered around whether or not they would remain fundamental and not change radically in a period of, say, 10 to 20 years. A dozen years later it seems that no major changes have to be made. None of the central open problems discussed in the book have been resolved, none of the basic notions underlying the topics in the various chapters has undergone a major modification, and very few of the new notions that have been defined since 2000 seems to deserve a place alongside the fundamental ones that are included. Thus, even had we decided to come out with a revised edition rather than a new printing, the text would have undergone only relatively minor changes. In the next few paragraphs, I will briefly mention a few of the relevant things that have happened in the last few years (thanks to Uri Feige for helping me compile this list). The reader can read these now or come back to them after reading the book itself.

Towards the end of Chapter 4 there is a discussion of approximating a network coloring. In recent years, strong new results have been proved, culminating in the fact that approximating a coloring by any constant (not just the 50% factor mentioned in the text) is also as hard as solving the P=NP problem.

The discussion of parallelism in the first part of Chapter 5 could benefit from mentioning the added importance it garners from the recent centrality of the internet and cloud computing, and perhaps most significantly multiple-core computers. New techniques for dealing with these technologies are surfacing as we speak (e.g., the map-reduce method for large distributed computation). Thus, parallelism is becoming an even more central and crucial topic in computer science, so that it is probably fair to say that resolving the open problems in this area has become a lot more urgent.

As to randomness, the second topic of Chapter 5, there has been a lot of interesting research done in recent years, much of it around the random classes RP and BPP and their connections to pseudo-random number generators. In fact, in a footnote in Chapter 5 I wrote that many researchers believe that RP is strictly larger than PTIME. That may still be true, but in recent years you will also find many who don't.

The third topic of Chapter 5 is quantum computing. Here the main thing to mention is the existence of larger quantum computers. The text mentions that at the time of publication the largest quantum computer actually built consisted of seven qubits. This number has grown steadily in recent years, and while the jury isn't in on the exact current number (among other things this has to do with whether one has a general purpose or special purpose machine), a company called D-Wave Systems has been working recently with 128-qubit chips, and is said to be developing a 512-qubit one. What relevance this will have to the fundamental issues of quantum computation discussed in the text remains to be seen.

The last chapter of the book, Chapter 7, on artificial intelligence and heuristics, is the one that could really do with a facelift. While the main issues raised there still stand strong (e.g., passing the Turing test is still nowhere in sight), there have been several exciting developments. These include significant improvements in computerized chess, and great improvements in natural language understanding and translation. One well-known example is IBM's Watson machine, which plays Jeopardy at the level of the top human contestants, and in the process exhibits an impressive ability to "understand" highly ambiguous language and deal with situations that have long been associated exclusively with human talent. In general the tools underlying heavy-duty artificial intelligence applications are becoming more powerful, such as powerful SAT solvers, which are very successful in practice, though in the worst case they don't do as well.

Finally, I would like to add that one of the most impressive and potentially revolutionary uses of computer science in the last 10-15 years has been in the life sciences. True, bioinformatics has been around for longer than that, but the use of deep techniques from algorithmics and system and software engineering in systems biology and the modeling and analysis of biological systems has grown by an order of magnitude in recent years. I share with others the opinion and belief that computer science will play a role in the science of the 21st century (which is poised to be the century of the life sciences) similar to the role played by mathematics in the physical sciences of the 20th century. Thus, chances are that the subject matter of this book will only become more relevant and of more interest.

David Harel, Rehovot; March, 2012