Viewpoint-Aware Object Detection and Pose Estimation

Daniel Glasner,

Meirav Galun, Sharon Alpert,

Ronen Basri and

Gregory Shakhnarovich

Image and Vision Computing editor's choice paper [DOI] [bibtex]

ICCV11 paper [PDF] [bibtex]

Extended version [PDF]

Benchmark [Weizmann Cars ViewPoint]

ICCV11 short video [HR (23M)] [LR (16M)]

ICCV11 poster [PDF]

Abstract

We describe an approach to category-level detection and viewpoint estimation for

rigid 3D objects from single 2D images. In contrast to many existing methods, we

directly integrate 3D reasoning with an appearance-based voting architecture. Our

method relies on a nonparametric representation of a joint distribution of shape

and appearance of the object class. Our voting method employs a novel parametrization

of joint detection and viewpoint hypothesis space, allowing efficient accumulation

of evidence. We combine this with a re-scoring and refinement mechanism, using an

ensemble of view-specific Support Vector Machines. We evaluate the performance of

our approach in detection and pose estimation of cars on a number of benchmark datasets.

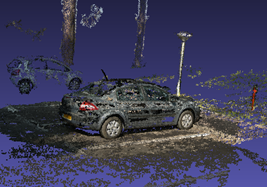

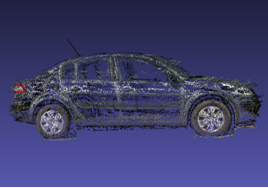

Model construction

We construct the model from multiple sets of car images, some example frames from

two different sequences can be seen in (a). Using Bundler we reconstruct a 3D scene

(b). The car of interest is segmented and aligned (c). Finally a view of the class

model is shown in (d).

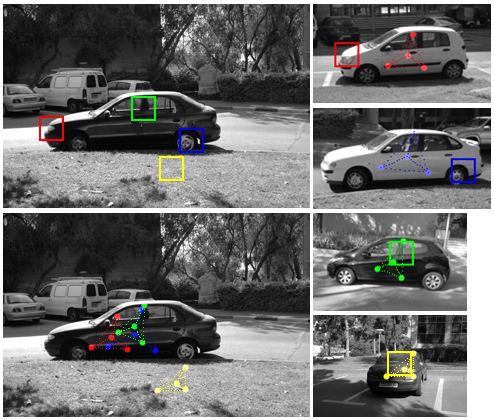

Voting process

Four patches from the test image (top left) are matched to database patches. The

matching patches are shown with the corresponding color on the right column. Each

match generates a vote in 6D pose space. We parameterize a point in pose space as

a projection of designated points in 3D onto the image plane. These projections

are shown here as dotted triangles. The red, green and blue votes correspond to

a true detection, the cast pose votes are well clustered in pose space (bottom left)

while the yellow match casts a false vote.

Results

3Dpose car category

Our detector achieves an average precision of 99.16%. Our average accuracy for pose

estimation is 85.28%.

Pascal VOC 2007 car category

Our detector achieves an average precision of 32.03%. This result is achieved without

using any positive training examples from the Pascal VOC dataset.

Research was supported in part by the Vulcan Consortium funded by the Magnet Program

of the Israeli Ministry of Commerce, Trade and Labor, Chief Scientist Office.

The vision group at the Weizmann Institute is supported in part by the Moross Laboratory

for Vision Research and Robotics.